Is AI 2027 Coming True?

The state of AI in Q2 2026, part 1

A quick aside: I was recently interviewed on The Cognitive Revolution. In the first half, I discuss the suite of tools I’ve had Claude Code build to improve my personal productivity. In the second half, host Nathan Labenz and I get into a broader discussion of where AI is going. It was a fun conversation!

You’ve likely seen references to Claude Mythos, Anthropic’s most advanced model. It’s the first AI tool that is smart enough to be dangerous.

This is the latest in a series of data points suggesting that things may be progressing as forecast in AI 2027. Which is concerning, as that scenario involves a significant chance of human extinction by 2030!

I’m working on a review of the overall “state of AI”. This post started out as the first installment. As it unfolded, I realized that this question – is AI 2027 coming true? – was a unifying theme. I’ll cover other topics in another post or posts. If there’s anything you’d like me to discuss, leave a comment!

AI continues to barrel forward

Possibly my favorite tweet of the year so far is a poke at last year’s claims that progress was stalling out:

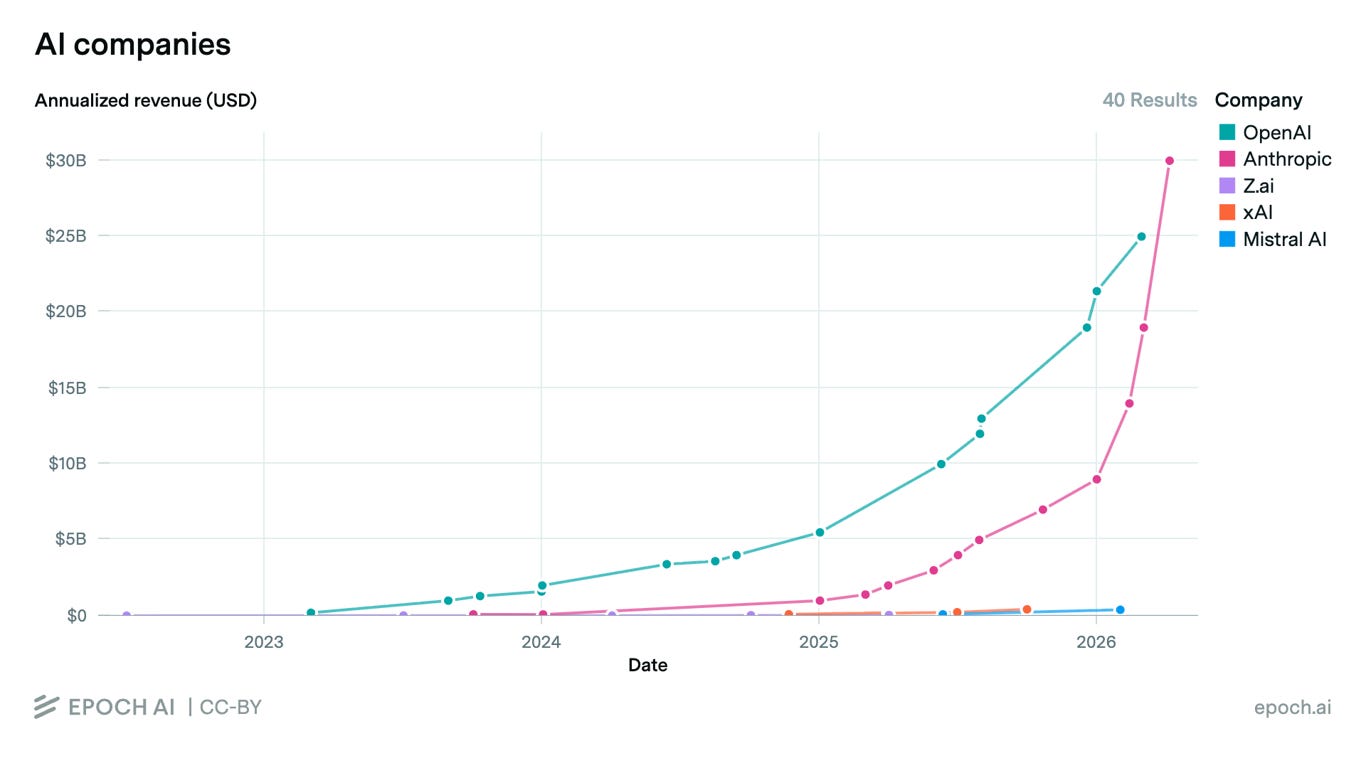

On every metric, AI continues up and to the right. The best measure of its importance might be people’s willingness to pay, and OpenAI revenue is absolutely exploding. Reportedly, their annual run rate nearly quadrupled from $5.5B to $21.4B over the course of 2025, and then reached $25B in February. If OpenAI’s growth is lunatic, then Anthropic’s pace has been absolutely bananas. Revenue grew nearly tenfold over the course of 2025, and then accelerated, tripling to a $30B rate in just three months [1].

For comparison, Anthropic’s revenue rate is now higher than McDonald’s, Paramount, Mastercard, Southwest Airlines, or Charles Schwab. Another tripling would put them in the neighborhood of Disney or Tesla. And that $30B figure (taken from an Anthropic announcement dated April 6) may already be out of date: As of April 24, the highly respected SemiAnalysis newsletter estimates Anthropic’s revenue rate at $40B/year.
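The growth figures above are easy to sanity-check. The following is a minimal sketch that uses only the run rates quoted in the text; the "implied" Anthropic figures are back-of-the-envelope values derived from the words "tripling" and "nearly tenfold", not reported numbers, and the annualized multiple assumes the three-month pace simply continued for a year.

```python
# Annualized revenue run rates quoted in the text, in billions of USD.
OPENAI_START_2025 = 5.5
OPENAI_END_2025 = 21.4
ANTHROPIC_APRIL_2026 = 30.0


def growth_multiple(start: float, end: float) -> float:
    """Factor by which a quantity grew between two snapshots."""
    return end / start


def annualized_multiple(multiple: float, months: float) -> float:
    """Multiple over 12 months if the observed pace continued (an assumption)."""
    return multiple ** (12 / months)


# OpenAI over 2025: "quadrupled" is a rounding of this ratio.
openai_2025 = growth_multiple(OPENAI_START_2025, OPENAI_END_2025)

# Anthropic: a tripling in three months, extrapolated to a full year.
anthropic_annualized = annualized_multiple(3.0, 3)

# Working backwards from the $30B rate (rough, derived values only):
# undo one tripling, then undo one tenfold year.
implied_end_2025 = ANTHROPIC_APRIL_2026 / 3.0
implied_start_2025 = implied_end_2025 / 10.0

# "Another tripling" from the $30B rate.
after_another_tripling = ANTHROPIC_APRIL_2026 * 3.0

print(f"OpenAI 2025 growth multiple: {openai_2025:.2f}x")
print(f"Anthropic 3-month tripling, annualized: {anthropic_annualized:.0f}x")
print(f"Implied Anthropic run rate, end of 2025: ~${implied_end_2025:.0f}B")
print(f"Implied Anthropic run rate, start of 2025: ~${implied_start_2025:.0f}B")
print(f"Run rate after another tripling: ${after_another_tripling:.0f}B")
```

A tripling every three months compounds far faster than a tenfold year, which is the sense in which the pace "accelerated". Since 2025 growth was "nearly" tenfold, the true start-of-2025 figure would be somewhat above the implied value.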

Benchmark scores and computing capacity are continuing their upward trajectories as well. None of the predictions that AI will “hit a wall” have panned out so far. In fact, the evidence seems to point toward things picking up speed.

And I haven’t even gotten to Mythos yet.

Claude Mythos: the model the government doesn’t want you to have

Here’s cybersecurity researcher Nicholas Carlini, who joined Anthropic (the creators of Mythos) last year:

I’ve found more bugs in the last couple of weeks than I found in the rest of my life combined.

He’s referring, of course, to what he and his colleagues have accomplished using Mythos. And by “bugs”, he means security vulnerabilities in widely used software. Plenty has been written about Mythos since its announcement on April 7, so I’ll just recap a few key points:

Mythos is a far more capable hacker than any (known) previous model.

This was entirely an accidental side effect of training the model to be good at other tasks, such as programming.

These capabilities are so dangerous that Anthropic is delaying the release until major software developers have time to fix the bugs identified by Mythos. (Anthropic may also not have enough computing capacity to offer Mythos to the general public; I’ll discuss this in the next installment.)

So, while Mythos is not the first AI model with the potential to cause harm, it is the first that can cause harm because of how smart it is – smart enough to find “high-severity vulnerabilities … in every major operating system and web browser”.

There is debate as to whether Mythos is really as big a deal as claimed. My understanding: yes, it is a big fucking deal. Skeptics point out that open-source models can identify the same bugs as Mythos, but there are two flaws with their argument.

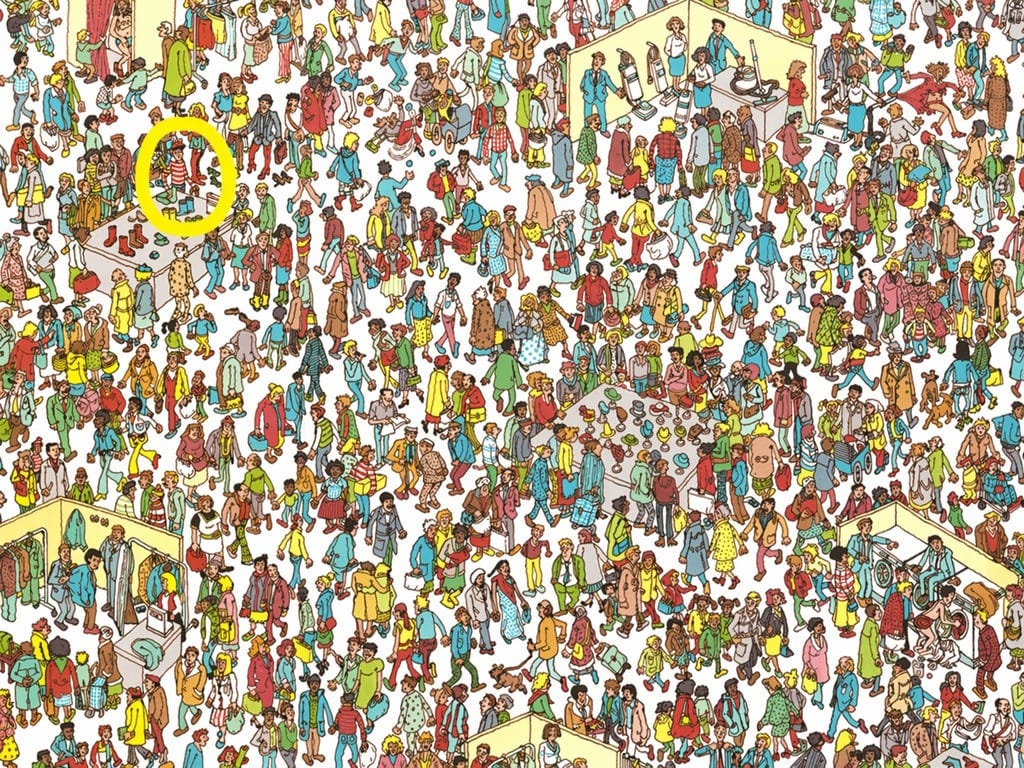

First: Mythos sifted through millions of lines of code in search of vulnerabilities. The people who used an open-source model to “reproduce” this work pointed the model at the precise snippet of code where Mythos had found a bug [2]. It’s easy to find Waldo if someone draws a big circle around him first.

Second: Mythos can do more than find bugs. It can chain them into exploits, and in some cases it can carry out a full-blown attack against realistic software systems. This is much more difficult, and much more useful for an attacker. In Star Wars terms:

1. Finding a bug: analyze the plans for the Death Star and notice the small thermal exhaust port that leads directly to the reactor system.

2. Crafting an exploit: piece together a workable plan of attack, incorporating the additional observations that the Death Star’s defenses “are designed around a direct large-scale assault” and aren’t tight enough to keep out an X-wing fighter; that the shaft is ray-shielded, but proton torpedoes can still penetrate; that X-wings do in fact carry proton torpedoes; and that an attacking ship can hide from the gun turrets by flying along the trench that leads up to the exhaust port.

3. Carrying out an attack: launch a swarm of X-wings with skilled pilots, navigate past the fixed defenses, successfully engage or avoid the defending TIE fighters, traverse the trench, organize wingmen so the lead pilot can focus on the exhaust port, and release a torpedo at just the right moment.

Existing models have pretty much been limited to step 1, finding bugs. Mythos is capable of finding a much larger number of bugs, has constructed working exploits for many of them (step 2), and gets farther than previous models in managing an end-to-end attack (step 3). It represents a sea change in cyber capabilities [3].

In the very near term, Mythos looks like a net benefit to security. Under the name Project Glasswing, Anthropic is giving companies like Microsoft, Apple, the Linux Foundation, and Chase Bank a head start – granting early access to identify and correct bugs before an attacker can use Mythos to identify and exploit them.

What about the longer term? AI capabilities will progress very rapidly, and both attackers and defenders will take advantage. My guess is that the most professionally managed systems will wind up, on balance, more secure. But there are a lot of important systems in the world that are neglected, under-budgeted, or subject to excessive bureaucracy – and they may be in for a bad time. You don’t need a zero-day bug [4] to break into a system like that, but you do need an army of mid-skilled hackers to find the vulnerable systems and figure out a specific way in. AI is going to democratize the hell out of that kind of work. Watch out for more cybercrime – for instance, attacks on schools and hospitals [5].

Ordinary consumers might generally come out ahead, as our security depends primarily on device and service providers like Apple, Google, and Microsoft, who are well positioned to make good use of Mythos and its successors. But we may also be getting even more of those “your personal information may have been stolen” letters from the many other companies we do business with.

Meanwhile, consumers and businesses are rapidly adopting new AI-based tools. That increased pace of change brings increased opportunities for error. Time will tell how much risk we’re all willing to accept in return for access to the latest and greatest capabilities.

And of course I’ve only touched on one aspect of Mythos’ capabilities – its inadvertent skill at cyber attack. Early indications are that it is also a major advance in areas that Anthropic deliberately trained it for, such as coding. What does that tell us about overall progress?

Things are progressing on schedule – the crazy schedule

Broadly speaking, there are three views of AI progress:

Dismissive – AI is mostly hype. It will amount to, at best, a mildly useful tool for some applications.

Explosive – AI is heading toward “recursive self-improvement” [6], after which it will bootstrap itself into an all-powerful technology that transforms the Universe, empowering and/or extinguishing humanity in the process.

It’s Complicated – AI is a big deal, but will take decades (or longer) to play out, and may hit a ceiling at some point.

The dismissive view is already ruled out. We’ve seen far too much evidence of AI providing dramatic value in the real world. A financial correction (“bubble pop”) is always possible, but it would be a temporary thing, like the dot-com crash: reflecting over-eager investment, not a flawed concept.

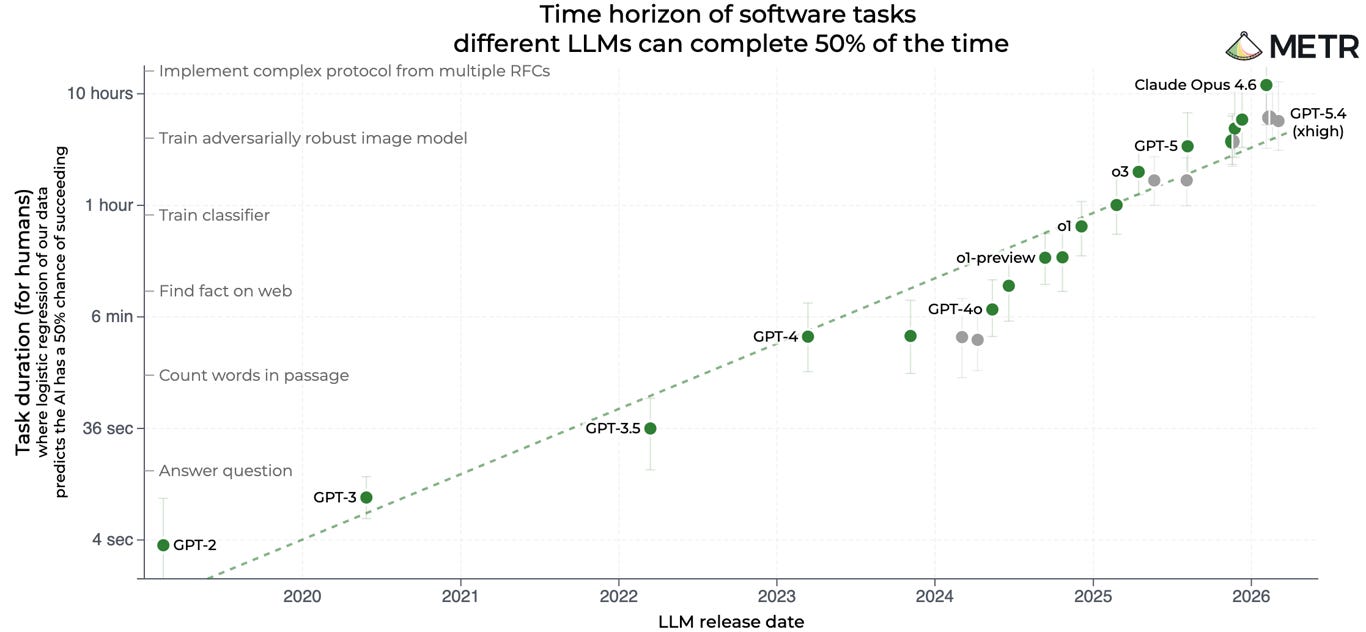

The last few years have provided a lot of evidence which appears to support the explosive view. AI progress has been remarkably fast, and remarkably predictable. Many AI trends, when plotted on appropriate axes, look like straight lines [7] which point toward transformative change in the next few years.

This progress, being predictable, has in fact been predicted, notably in AI 2027 – the most famous articulation of the explosive view. (For an overview, see my writeup from last year). In the year since AI 2027 was published, events have played out more or less as portrayed. One contributor recently posted some thoughts about what they got right, and in February two of the lead authors graded the predictions for 2025. Accurate predictions include the emergence of powerful hacking abilities, military use of frontier AI models, and aggressive build-out of AI data centers.

This doesn’t mean that things will continue to progress on the AI 2027 timeline. It’s under-appreciated that the explosive and it’s-complicated views do not diverge in their predictions until the advent of “strong AGI”. It’s not unreasonable to take the leap in capabilities demonstrated by Claude Mythos as evidence for the explosive view. It’s also quite reasonable to believe that we’re on the It’s Complicated track. As I’ll explain below, the evidence is still ambiguous.

In fact, progress on quantitative measures has only been about 65% as fast as predicted in AI 2027. And I believe even this overstates progress, as I’ll explain in the next section [8]. But logic aside, early 2026 has felt explosive. It’s one thing to have an intellectual expectation that AI models would soon be able to write full-fledged applications and autonomously construct security exploits, and quite another to see it happening. I lean toward the It’s Complicated camp in the near term, but the visceral sensation of change has a way of making it hard to trust the arguments. Let’s review those arguments.

AI is still a normal technology, for now

If AI 2027 is the most famous expression of the Explosive view of AI, AI as Normal Technology is the canonical argument that It’s Complicated. I wrote an entire post about it. The key assertions:

AI’s impressive showing on benchmarks and coding will struggle to generalize to broader real-world settings.

Even as AI develops genuinely useful capabilities, there will be (or should be) delays and limits on how it is adopted in practice.

AIs will not develop radically superhuman abilities [9], because the real world is often too complex for abstract genius to eliminate the need for trial and error.

In other words, while the authors agree that AI may be a monumentally important technology, they also believe that it will be subject to “normal” frictions that rule out the most aggressive scenarios and leave room for human agency. And indeed, even as new AI models keep racking up achievements, it’s still easy to find reminders of their limitations. A few recent examples:

In The Centaur Era, I noted serious errors committed by ChatGPT Pro and Claude Opus during a research project. For instance, Opus noticed that it had used a dramatically wrong figure as part of its analysis, but did nothing to correct the resulting incorrect conclusion.

Many people have predicted that AI would unleash a tsunami of sophisticated propaganda; this figures into the AI 2027 scenario. However, no tsunami has manifested; one of the authors recently acknowledged that “AI propaganda hasn’t been as effective as feared”.

It can be difficult for businesses to integrate AI into their work. In a recent report on Google Cloud’s Next conference, The Information reporter Erin Woo writes:

Last year at Google Cloud’s Next conference, executives touted the power of the company’s AI models for businesses. This year the theme is how to help companies use the models.

And with good reason: In interviews at the conference, customers and Google Cloud resellers told me that companies racing to adopt AI are hitting roadblocks. Some are still setting up their first AI agents, while others are struggling to manage a menagerie of them. [emphasis added]

Even mighty Mythos has limitations. As Anthropic reports:

…the model still cannot be left alone in a production environment... It frequently mistakes correlation with causation and it is not able to course-correct for different hypotheses. When asked to write incident retrospectives, more often than not it focuses on a single root cause and does not consider multiple contributing factors.

I mentioned that the authors of AI 2027 recently reported that things have been progressing about 65% as fast as they’d predicted. But I would argue that the most important component of their forecast is “AI software R&D uplift” (the extent to which AI is accelerating its own development). By their estimate, uplift is only 17% of what they’d predicted at this point. The 65% figure primarily reflects metrics that are not directly related to real-world AI capabilities, such as benchmark scores and data center construction. I should note however that frontier lab revenue is at 80% of prediction (in their scoring, which may in fact be conservative here).

As for qualitative predictions, some of the claimed “wins” are debatable. For instance, they cite evidence that “Bioweapon capabilities seem on track”, but we’re currently publishing a series of posts explaining that this may underestimate the complexity of bioweapon creation.

AI is undeniably racking up achievement after achievement. It is becoming superhuman in the scale of tasks it can undertake – Mythos does not seem to have done anything that a human expert could not have done given enough time, but it has identified many bugs that experts never got around to. At the same time, current systems still have many shortcomings compared to the range, breadth, and depth of human cognition, and have had limited impact so far. The question is whether that will last.

Where do things go from here?

If it seems like the rate of change is accelerating, that’s because it is. Get used to it: this staircase has no landings, and there is no “new normal” for things to settle into. Rapid change is the new normal. The pace will likely continue to escalate.

AI is, for now, still a normal technology. As the AI as Normal Technology authors note, that “doesn’t mean mundane or predictable”; it means that its impact is limited by factors such as slow adoption. AI is advancing in capability and diffusing into the economy at a faster pace than most previous technologies, but that’s a difference in degree, not a difference in kind… for now, at least.

Here are a couple of possible future scenarios. In one, models really start to accelerate their own development over the course of 2026. There are already signs that this is happening:

Perhaps the most telling sign that OpenAI and Anthropic coding tools are accelerating AI R&D is this report that Google DeepMind engineers threatened to quit if they were required to use Google’s own product instead. As a result, the current blistering pace of progress may soon seem so slow as to almost be quaint, and AI will begin to have an unambiguous impact in various sectors of the economy, science, and society. That’s my conservative scenario!

The aggressive scenario follows the outline of AI 2027, though probably stretched out by a few years. AI fully automates its own development, and then quickly “solves” robotics and automates other critical functions. Barriers to adoption are swept away, through some combination of overwhelming competitive pressure and AI becoming flexible enough to adapt to the workplace rather than the workplace needing to adapt to it. Whatever comes next will be unrecognizable.

It’s hard to tell which path we’re on. Here are some of the key questions:

Will the rapid progress in AI coding ability lead to full automation of AI R&D, or are “softer” skills also needed?

Can the exponential growth of data center construction be sustained? If not, will progress in AI capabilities slow down as the tailwind of ever-increasing research compute budgets fades?

Would automated R&D result in rapid progress in AI capabilities across all domains, from scientific research to strategic planning? Or will advances still be bottlenecked on feedback from real-world deployment?

Will increasing AI capability and flexibility overcome traditional barriers to rapid adoption?

How quickly will robots “happen”?

I’m increasingly convinced that there’s a singularity coming somewhere in the next few decades; the questions above will determine when. I’m even more confident that the current, disorienting rate of change is the slowest we’re ever likely to see.

In subsequent installments of this series on the state of AI, I’ll cover topics such as competition between US and Chinese AI labs, data centers, impacts on the job market and elsewhere, and beneficial applications of AI. Use the comments to suggest additional topics!

Lots of people provided suggestions and feedback for this series! Thanks to Abi Olvera, Alex Booker, Clara Collier, Dave Kasten, Eli Pariser, Emma Kumleben, Gideon Lichfield, Kai Williams, Taren Stinebrickner-Kauffman, and Tim Schnabel. Apologies if I missed anyone!

[1] Note that these figures do not necessarily imply that Anthropic’s business is now larger than OpenAI’s. There are important differences in how the two companies measure revenue, these figures are snapshots from different months, and some of the numbers are unofficial.

[2] If you had the budget, you could use an open-source model to analyze all of the code that Mythos analyzed. However, you’d wind up drowning in “false positives”: the open-source models would likely find some of the bugs that Mythos found, but they’d also generate zillions of incorrect bug reports, and it would be difficult to separate the wheat from the chaff.

[3] Benchmark results suggest that OpenAI’s GPT-5.5, which was released a couple of weeks after Mythos, may be its equal in cybersecurity capabilities. Other signals suggest that Mythos really is a step ahead and the benchmarks are missing something. Taking into account some private scuttlebutt, I lean toward the latter take.

[4] A “zero-day bug” is a software flaw which the developer has known about for zero days, i.e. one which they have not yet had any time to fix (indeed they may not be aware of it at all).

[5] For instance, “ransomware” attacks, in which a hacker “kidnaps” an organization’s computer systems by encrypting all of the data and offering to remove the encryption in return for a payment, typically made in cryptocurrency.

Note that, while we may see an increase in successful cyberattacks, the impact may be limited by non-technical bottlenecks. See our report from last year, The Cybersecurity Equilibrium.

[6] Recursive Self-Improvement refers to the idea that sufficiently advanced AI may accelerate its own development, potentially leading to runaway, explosive progress. See When AI Builds AI.

[7] Some measures of AI capability actually seem to be accelerating of late, as indicated in a report by Epoch that I linked to earlier. The graph shown here reflects that accelerating trend.

[8] The authors of AI 2027 also now believe things will move somewhat more slowly than in the published scenario, though they still think it’s quite possible that superintelligence will emerge within 18 months.

[9] Of course AI systems are already superhuman in attributes such as speed and patience, and other software systems are superhuman at arithmetic, chess, and many other things. Here, when I raise the question of whether AI will develop radically superhuman abilities, I mean something like “the ability to figure things out that no person, given enough time, could have figured out”. For instance, designing a perfect drug without any trials in humans or even lab mice.

Thanks for this review! Some comments:

We co-authored an article with the "AI as Normal Technology" authors you might be interested in: https://asteriskmag.substack.com/p/common-ground-between-ai-2027-and

"one of the authors recently acknowledged that “AI propaganda hasn’t been as effective as feared”."

That was about my previous scenario, written in 2021, not about AI 2027. I don't think there's been less AI propaganda so far than depicted in AI 2027, because AI 2027 didn't really depict AI propaganda happening by mid 2026. (If you are interested in the previous scenario, it's called What 2026 Looks Like. https://www.lesswrong.com/posts/6Xgy6CAf2jqHhynHL/what-2026-looks-like)

"By their estimate, uplift is only 17% of what they’d predicted at this point. The 65% figure primarily reflects metrics that are not directly related to real-world AI capabilities, such as benchmark scores and data center construction. I should note however that frontier lab revenue is at 80% of prediction (in their scoring, which may in fact be conservative here)."

After we published that, frontier lab revenue has continued to increase and now is higher than AI 2027 predicted. This matters because frontier lab revenue is pretty directly related to real-world AI capabilities!

As for uplift: We actually think we're being pretty harsh on ourselves here. The reason it's only 17% is because at the time we wrote AI 2027 we thought uplift was higher than it actually was. In our writeup we say: "AI software R&D uplift is behind pace. This is primarily because we have updated our estimate of uplift in early 2025 downward, and thus our uplift estimates for the end of 2025 are similar to our original estimates for the start of AI 2027." So basically, the slope of the line is similar to what we expected, but the whole line is shifted down relative to what we expected. Very different from progress only being 17% as fast as we expected, which is a possible interpretation someone might have from just seeing that figure.
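The difference between "the line is shifted down" and "progress is only 17% as fast" can be made concrete with a small sketch. All numbers below are hypothetical and in arbitrary units, chosen only so that the two ratios come out visibly different; they are not taken from AI 2027 or from its grading writeup, and the scoring method used there may well differ from either function.

```python
def level_ratio(actual_end: float, predicted_start: float, predicted_end: float) -> float:
    """Gain above the originally assumed starting level, as a fraction of the predicted gain."""
    return (actual_end - predicted_start) / (predicted_end - predicted_start)


def pace_ratio(actual_start: float, actual_end: float,
               predicted_start: float, predicted_end: float) -> float:
    """Actual change over the period as a fraction of the predicted change."""
    return (actual_end - actual_start) / (predicted_end - predicted_start)


# Hypothetical values, for illustration only (NOT from AI 2027 or its grading).
predicted_start, predicted_end = 20.0, 50.0  # original forecast for the period
actual_start, actual_end = 5.0, 25.0         # starting estimate revised downward

by_level = level_ratio(actual_end, predicted_start, predicted_end)
by_pace = pace_ratio(actual_start, actual_end, predicted_start, predicted_end)

print(f"Measured against the original starting level: {by_level:.0%}")
print(f"Measured as change over the period: {by_pace:.0%}")
```

With these made-up inputs, the same outcome looks far behind when scored against the original starting level and much closer to forecast when scored as change over the period. A single percentage does not say which of the two comparisons it reflects.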